Projects

Here are some projects — small & large, scientific & artistic — that I've fooled around with in my spare time. I hope you find them to be some combination of useful, interesting, and diverting.

Woodworking

I've been teaching myself woodworking in my spare time. You can see some of the things I've built here.

False Leveler:

Artificial histogram matching

False Leveler is a Processing program I created to do histogram matching with random, artificially created destination histograms. Rather than repeating what I wrote on the project's page, I'll just show you some examples.
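Histogram matching remaps each gray level `v` to the destination level whose cumulative frequency first reaches the source image's cumulative frequency at `v`. Below is a minimal sketch of that idea. It assumes 8-bit grayscale pixels stored in a flat list. It also assumes the destination histogram is just a list of random weights. The `random_histogram` helper is hypothetical, since this page doesn't say how False Leveler builds its artificial histograms.

```python
import random
from bisect import bisect_left
from itertools import accumulate

LEVELS = 256  # assumes 8-bit grayscale values in 0..255

def random_histogram(rng, levels=LEVELS):
    """Hypothetical 'artificial' destination histogram: random non-negative weights."""
    return [rng.random() for _ in range(levels)]

def cdf(hist):
    """Normalized cumulative distribution of a histogram."""
    total = sum(hist)
    return [c / total for c in accumulate(hist)]

def match_histogram(pixels, target_hist, levels=LEVELS):
    """Remap pixel values so their distribution approximates target_hist."""
    src_hist = [0] * levels
    for p in pixels:
        src_hist[p] += 1
    src_cdf = cdf(src_hist)
    dst_cdf = cdf(target_hist)
    # For each source level, take the first destination level whose CDF reaches
    # the source CDF; clamp in case float rounding leaves dst_cdf[-1] just under 1.
    lut = [min(bisect_left(dst_cdf, src_cdf[v]), levels - 1) for v in range(levels)]
    return [lut[p] for p in pixels]

rng = random.Random(42)
image = [rng.randrange(LEVELS) for _ in range(10_000)]
leveled = match_histogram(image, random_histogram(rng))
```

Because the lookup table is monotonic, a brighter input pixel never ends up darker than a dimmer one. Only the spacing between tones changes.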

lldist.rb:

Calculate the distance between latitude/longitude pairs

This is such a minor thing that it doesn't rise to the level of "project." I've moved it to a blog post instead of describing it here.
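For anyone who lands here looking for the calculation itself, a common way to get the great-circle distance between two latitude/longitude pairs is the haversine formula. This is a minimal sketch that assumes a spherical Earth with a mean radius of 6371 km. It is written in Python rather than Ruby and isn't necessarily what lldist.rb does.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; assumes a spherical Earth

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))
```

For example, `haversine_km(40.7128, -74.0060, 51.5074, -0.1278)` gives the New York to London distance under that spherical approximation.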

Roy's Mirror –or– Through a Glass, Dithered

This is a simple animation I whipped up in Processing based on Roy Lichtenstein's series of mirror paintings.

This page has a little more background, the complete code, and a version which will run in your browser using your webcam. (For certain browsers, anyway.) If the animation doesn't work in your browser, here's how it looks:

TwiGVis: Twitter Mapping

TwiGVis (TWItter Geography VISualizer) is a program I created to visualize all of the tweets my research group had collected during Hurricanes Sandy and Irene. It produces both still images, like the one below, and video output.

Later I modified it to also display data from the Red Cross on their mobile donations campaign.

Though I initially created it only for internal use in my lab, we received such good feedback at some talks that I've posted the code online. You can download the code and sample data here. Use and modify it as you wish under the terms of the GPL. Read more about the project and see more examples here. I hope some of you find this useful.

Happy mapping!

gifcrop.sh

(I've decided that this isn't complicated enough to be called a project, so I've put up a blog post about it instead.)

Reel Shadows: Boid Animations

This is an abstract algorithmic animation project I've been working on. In a nutshell, it's my interpretation of what would happen if you projected a movie onto a flock of birds instead of a screen.

You can read about my methods and the videos that inspired the project, as well as see some final renders, here. This video should give you a flavor.

Monet Blend

Generally speaking, I don't see in color well. Not that I'm color blind. But when I look at a scene I notice shape and form much more than I notice color. When I was a child my art teacher had to coax me into bothering to color anything in; I was satisfied with line drawings. I spent a lot of time designing and making paper models, but always out of plain, unadorned white cardboard.

There are exceptions to this preference for space over color. Monet, Turner and Rothko all make me sit up and notice color. I especially like the various series Monet did of the same scene from the same vantage point under varying conditions. I love seeing multiple works from a series side-by-side in galleries so I can compare them.

This project was born out of the desire to be able to look at multiple pieces in the same series of Monet paintings at the same time. Swiveling my head back and forth rapidly only works so well, and it earns me extra weird looks from other patrons. (Plus, I feel like I need to correct my weakness w.r.t. color, and if I'm going to learn about color I might as well learn from the best.)

What I've done is write a program which loads two Monet images and blends them together. Just averaging the two would create a muddled, uninspired mess. So I use a noise function to decide at each pixel how much to draw from image 1 and how much from image 2.

You can see an example of this blending matrix above. Darker pixels in the blending matrix will have a color more similar to "Sunset, Pink Effect," while lighter pixels are closer to "Hazy Sunshine." A pixel which is exactly 50% (halfway between white and black) will be given a color halfway between the color of the corresponding pixel in each image.
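The per-pixel rule is ordinary linear interpolation, with the blending-matrix value as the weight. Below is a minimal sketch, not the original Processing code. It assumes each image is a list of rows of 0-255 RGB tuples. It also assumes the matrix holds values in [0, 1], where 0 is black and 1 is white.

```python
def lerp_color(c1, c2, w):
    """Blend two RGB triples: w=0 gives c1, w=1 gives c2, w=0.5 gives the midpoint."""
    return tuple(round((1 - w) * a + w * b) for a, b in zip(c1, c2))

def blend_images(img1, img2, weights):
    """Per-pixel blend of two same-sized images (lists of rows of RGB tuples).

    weights[y][x] is the blending-matrix value in [0, 1]: dark values (near 0)
    favor img1, light values (near 1) favor img2.
    """
    return [
        [lerp_color(p1, p2, w) for p1, p2, w in zip(row1, row2, wrow)]
        for row1, row2, wrow in zip(img1, img2, weights)
    ]
```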

The blending matrix is a function of time, so the influence of each source image on the output shifts as the animation runs, letting me see different parts of each painting in turn.

By changing parameters I can control how smooth or muddled the noise is, how bimodal the distribution is, how fast it moves through time/space, etc.

Currently I'm using a simple linear interpolation between the two source images, which is then passed through a sigmoid function. There are at least a dozen other ways I could blend two colors. I need to explore them more thoroughly, but from what I've tried I like this approach. It's conceptually parsimonious and visually pleasing enough.
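One reading of that pipeline is that the interpolation weight goes through the sigmoid before the two colors are mixed. That would also explain how the blend can be made more or less bimodal. Below is a minimal sketch under that assumption, with a hypothetical `steepness` parameter. The logistic curve is rescaled so that a weight of exactly 0 or 1 still selects a single painting.

```python
import math

def sigmoid_weight(w, steepness=10.0):
    """Push a blend weight in [0, 1] toward 0 or 1 with a logistic curve centered at 0.5."""
    def logistic(v):
        return 1.0 / (1.0 + math.exp(-steepness * (v - 0.5)))
    lo, hi = logistic(0.0), logistic(1.0)
    return (logistic(w) - lo) / (hi - lo)  # rescale so 0 -> 0 and 1 -> 1
```

Using `sigmoid_weight(w)` in place of the raw noise value `w` sharpens the boundaries between the two paintings. Small `steepness` values approach plain linear blending.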

The examples above show colors interpolated in RGB space. The results are good, but can get a little wonky if the two source colors are too dissimilar. Interpolating between two colors is a bit of black magic. AFAICT there is no one gold standard way to go about it. I've tried using HSV space but wasn't too pleased with the results. After that I wrote some code which does the interpolation in CIE-Lab color space. I think the results are very slightly better than RGB, but it's difficult for me to tell. I'll render out a couple of examples using that technique and maybe you can judge for yourself.
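For reference, interpolating in CIE-Lab means three steps: convert both colors from sRGB to Lab, mix the Lab coordinates, and convert back. Below is a minimal sketch using the standard sRGB transfer curve, the sRGB/D65 conversion matrices, and the D65 reference white. Out-of-gamut results are simply clipped, which is an assumption rather than anything the original code is known to do.

```python
# D65 reference white (Y normalized to 1) and the CIE threshold constant.
XN, YN, ZN = 0.95047, 1.00000, 1.08883
DELTA = 6 / 29

def _srgb_to_linear(c):
    c /= 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def _linear_to_srgb(c):
    c = min(max(c, 0.0), 1.0)  # clip out-of-gamut values
    c = 12.92 * c if c <= 0.0031308 else 1.055 * c ** (1 / 2.4) - 0.055
    return round(c * 255)

def _f(t):
    return t ** (1 / 3) if t > DELTA ** 3 else t / (3 * DELTA ** 2) + 4 / 29

def _f_inv(t):
    return t ** 3 if t > DELTA else 3 * DELTA ** 2 * (t - 4 / 29)

def rgb_to_lab(rgb):
    r, g, b = (_srgb_to_linear(c) for c in rgb)
    x = 0.4124564 * r + 0.3575761 * g + 0.1804375 * b
    y = 0.2126729 * r + 0.7151522 * g + 0.0721750 * b
    z = 0.0193339 * r + 0.1191920 * g + 0.9503041 * b
    fx, fy, fz = _f(x / XN), _f(y / YN), _f(z / ZN)
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))

def lab_to_rgb(lab):
    L, a, b = lab
    fy = (L + 16) / 116
    x = XN * _f_inv(fy + a / 500)
    y = YN * _f_inv(fy)
    z = ZN * _f_inv(fy - b / 200)
    r = 3.2404542 * x - 1.5371385 * y - 0.4985314 * z
    g = -0.9692660 * x + 1.8760108 * y + 0.0415560 * z
    bl = 0.0556434 * x - 0.2040259 * y + 1.0572252 * z
    return tuple(_linear_to_srgb(c) for c in (r, g, bl))

def lab_lerp(c1, c2, w):
    """Blend two sRGB colors (0-255 ints) by interpolating in CIE-Lab; w=0 gives c1."""
    l1, l2 = rgb_to_lab(c1), rgb_to_lab(c2)
    return lab_to_rgb(tuple((1 - w) * p + w * q for p, q in zip(l1, l2)))
```

Lab is designed to be roughly perceptually uniform. Because of that, the midpoint between black and white comes out darker here than the RGB midpoint of (128, 128, 128).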

If I wanted to get sophisticated about this, I would also write a method to do image registration on the source images. I have another semi-completed project which could really use that, so once I get around to coding it for that project I'll transfer it over to this one as well. (Although that other project needs to do it on photographs, and this on paintings, and the latter is a lot trickier.)

Diffusion Patterns

This is an animation process inspired by Leo Villareal's and Jim Campbell's work with LEDs.

Since I have neither the money nor living space for hardware, I'm settling for this software version.

A grid of nodes is initialized with random wirings between adjacent nodes. Each node is given a fixed amount of charge, and the charge flows between wired nodes over time. At each time step a random number of wires are created or destroyed, which keeps the system from settling into a fixed state.
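The charge-diffusion rule can be sketched in a few lines. This is a minimal sketch, not the original code, and it makes several assumptions:

- Wiring is between 4-neighbors only.
- Initial charges are random.
- Flow across each wire is proportional to the charge difference at its ends, so total charge is conserved.
- A small random number of wires are toggled each step.

`wire_prob`, `rate`, and `max_toggles` are hypothetical parameters.

```python
import random

def grid_edges(width, height):
    """Every possible wire: pairs of horizontally or vertically adjacent nodes."""
    edges = []
    for y in range(height):
        for x in range(width):
            if x + 1 < width:
                edges.append(((x, y), (x + 1, y)))
            if y + 1 < height:
                edges.append(((x, y), (x, y + 1)))
    return edges

class DiffusionGrid:
    def __init__(self, width, height, wire_prob=0.5, rate=0.1, seed=None):
        self.rng = random.Random(seed)
        self.edges = grid_edges(width, height)
        self.wired = {e for e in self.edges if self.rng.random() < wire_prob}
        self.charge = {(x, y): self.rng.random() for y in range(height) for x in range(width)}
        self.rate = rate  # fraction of the charge difference that crosses a wire per step

    def step(self, max_toggles=3):
        # Charge flows along each wire from the higher-charged end to the lower one.
        delta = dict.fromkeys(self.charge, 0.0)
        for a, b in self.wired:
            flow = self.rate * (self.charge[a] - self.charge[b])
            delta[a] -= flow
            delta[b] += flow
        for node, d in delta.items():
            self.charge[node] += d
        # Create or destroy a random number of wires so the system never settles.
        for _ in range(self.rng.randint(0, max_toggles)):
            self.wired ^= {self.rng.choice(self.edges)}

grid = DiffusionGrid(32, 18, seed=1)
for frame in range(100):
    grid.step()  # grid.charge now holds each node's brightness for this frame
```

Keeping `rate` at or below 0.25 means a node with four wires can never send out more charge than it holds, so charges stay non-negative.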

I use a variation of Blinn's metaball algorithm to render each node, which I know isn't a great match for the look of LEDs when it comes to photorealism, but I like it for this purpose and I've been looking for an excuse to play around with metaballs anyway.

Metaballs are typically coded to have constant mass/charge/whatever and varying location. I've flipped that so their charge is variable and location is constant. Visually I think it's actually a pretty good match for the things Jim Campbell does with LEDs behind semi-opaque plexiglass sheets. (Or could be if I tweaked it with that in mind as a goal.)
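The inverted metaball setup, with fixed centers and varying charge, might look something like this. Blinn's original "blobby" model sums Gaussian falloffs around each center, and this minimal sketch does the same. It uses a hypothetical `falloff` parameter and a simple brightness ramp up to a `threshold` rather than a hard iso-surface. From frame to frame, only the charges in `nodes` would change, for example by copying them from the charge-diffusion simulation.

```python
import math

def field(px, py, nodes, falloff=0.05):
    """Sum of Gaussian contributions at pixel (px, py) from (x, y, charge) nodes."""
    return sum(q * math.exp(-falloff * ((px - x) ** 2 + (py - y) ** 2)) for x, y, q in nodes)

def render(width, height, nodes, threshold=1.0, falloff=0.05):
    """Grayscale frame in 0..255: brightness ramps linearly up to full at `threshold`."""
    return [
        [min(255, round(255 * field(px, py, nodes, falloff) / threshold)) for px in range(width)]
        for py in range(height)
    ]
```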

I'd like to take this same rendering process and use it for a lot of other processes besides the charge-diffusion algorithm that's running in the background here.

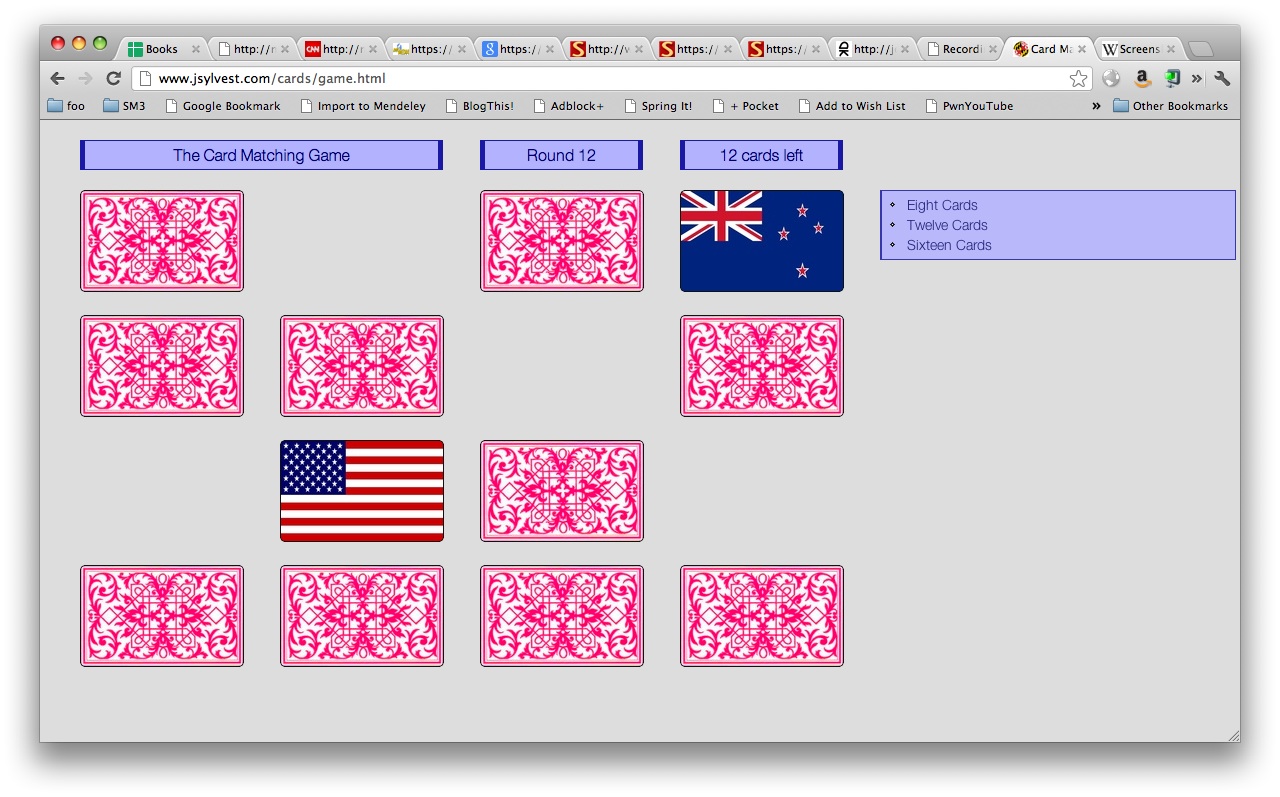

Card Matching

As part of my research, I built a neural network system to play a memory game you might have played as a child. It's variously known as "Concentration," "Memory," "Pelmanism," "Pairs" and assorted other names. The basic idea is that several pairs of cards are face-down on a table, and you have to find all the pairs by turning over two cards at a time.
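The rules themselves are simple to model. Below is a minimal sketch of the game with a hypothetical perfect-memory player as a reference point. It is not the neural network system, and the player's strategy is an assumption chosen for illustration:

- If a pair has already been located, claim it.
- Otherwise, turn over an unseen card, and flip its partner next if that partner has been seen before.

```python
import random

def play_perfect_memory(n_pairs, seed=None):
    """Play one game of Concentration with a perfect-memory player; return the turn count."""
    rng = random.Random(seed)
    cards = [value for value in range(n_pairs) for _ in range(2)]
    rng.shuffle(cards)                      # lay the pairs face down in random order
    unseen = list(range(len(cards)))        # positions never turned over yet
    rng.shuffle(unseen)                     # explore unseen cards in random order
    seen = {}                               # value -> position of a seen, unmatched card
    pending = 0                             # pairs fully located but not yet claimed
    matched = turns = 0
    while matched < n_pairs:
        turns += 1
        if pending:                         # flip a pair we already know: guaranteed match
            pending -= 1
            matched += 1
            continue
        first = unseen.pop()
        v = cards[first]
        if v in seen:                       # partner seen earlier: flip it as the second card
            del seen[v]
            matched += 1
            continue
        second = unseen.pop()
        w = cards[second]
        if w == v:                          # lucky match on two new cards
            matched += 1
        elif w in seen:                     # no match now, but w's pair is known for next turn
            del seen[w]
            pending += 1
            seen[v] = first
        else:                               # two new, unrelated cards: remember both
            seen[v] = first
            seen[w] = second
    return turns
```

Averaging `play_perfect_memory(12, seed=s)` over many seeds gives an idealized lower bound on turns that both human and model players can be compared against.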

I wanted to compare my system's performance to that of humans, but didn't have any information about human performance to use as a benchmark. To clear that hurdle, I built an online version of the game for people to play so I can record their behavior. You can play my card matching game by going here.

In addition to collecting data for my research, coding this game also gave me an excuse to learn jQuery, which was nice. I want to go back in time to 2001, when I was grappling with cross-browser DHTML, and give myself a copy of jquery.js.

WynneWord Crossword Generator

Way back in undergrad, one of my assignments was to write a program to automatically generate small crossword puzzles. (Just the grid of letters, not the associated clues.) I liked the solution I came up with then, but was consistently frustrated that I had only a few days to work on it. Ever since, I've wanted to go back and do it right.

WynneWord is a small attempt to solve that problem better than I had time to then. (Of course I still don't have as much time as I wish to improve this.) One of the improvements over the approach I used in school is to store all the potential words in a trie. When I actually wrote the code to do that, I had no idea such data structures had an actual name. It wasn't until years after I had written the code out on paper, and then later still coded it up, that I realized I had stumbled upon something that already existed.
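A trie stores words by shared prefixes. As a result, filling a slot that already has some letters fixed (say `B.R`) only walks the branches consistent with those letters. Below is a minimal sketch, not WynneWord's code, where `.` marks an unfilled square.

```python
class TrieNode:
    __slots__ = ("children", "is_word")

    def __init__(self):
        self.children = {}
        self.is_word = False

class Trie:
    def __init__(self, words=()):
        self.root = TrieNode()
        for word in words:
            self.insert(word)

    def insert(self, word):
        node = self.root
        for ch in word:
            node = node.children.setdefault(ch, TrieNode())
        node.is_word = True

    def matches(self, pattern):
        """All words fitting a slot pattern such as 'A.E' ('.' = unfilled square)."""
        results = []

        def walk(node, i, prefix):
            if i == len(pattern):
                if node.is_word:
                    results.append(prefix)
                return
            ch = pattern[i]
            # A fixed letter follows one branch; a blank square tries every branch.
            branches = node.children.items() if ch == "." else [(ch, node.children.get(ch))]
            for letter, child in branches:
                if child is not None:
                    walk(child, i + 1, prefix + letter)

        walk(self.root, 0, "")
        return results
```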

I'm waiting to post code and sample results until I find time to make at least one of the following improvements:

- Build a much larger corpus of words and especially phrases and proper nouns to draw from.

- Improve the back-tracking system so that ... you know what? I can't really explain this without a ton of background. For now I'll just leave it at "improved back-tracking."

- Parallelize part of the search procedure. (Although I don't think this problem lends itself terribly well to parallelization, there are parts of it that could potentially benefit.) This would mostly be an excuse to re-learn parallel programming, which is something I haven't needed to do in 5+ years.

13 13
...##....#...
.....#...#...
......#...##.
###...#......
..#...###...#
..#.#....#..#
.....###.....
#..#....#.#..
#...###...#..
......#...###
.##...#......
...#...#.....
...#....##...

***************
*BAR**AREN*ART*
*ARENA*ORE*RAI*
*RANGER*AIR**M*
****URE*SLATES*
*AR*NOT***NET**
*LA*I*IDLE*AH**
*LINSE***REMIC*
**NE*LIMB*A*CA*
**IRA***ART*ST*
*SEDUCE*ROA****
*I**LAN*RUGGED*
*NOT*REE*TERRI*
*GRO*EARL**OOP*
***************

***************
*SAN**ORTH*SAN*
*ERASE*ARA*ARA*
*EIGHTH*INC**S*
****ATE*EDITOR*
*AR*RAN***ARA**
*RA*P*NINE*AX**
*INDEX***RECAP*
**KI*IMAM*A*CO*
**INC***ALT*AL*
*SNEEZE*RAM****
*A**LAN*TSAKOS*
*LEA*REA*KNOTT*
*ARI*RAND**OTA*
***************

(Yes, some of the words are weird. That's a fault in the ad hoc corpus WynneWord currently reads as input, not the program itself. I think a lot of the words are drawn from financial reports and the Enron emails, which explains why there are a lot of obscure company names and various financial acronyms in my results.)
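Judging by the filled grids, the 13×13 template above consists of a size header followed by rows of `.` for open squares and `#` for black squares. A natural first step in any fill is to find every across and down slot. Below is a minimal sketch of that step, assuming the header gives rows and then columns. It is an illustration, not WynneWord's code.

```python
def parse_template(text):
    """Parse a 'rows cols' header followed by rows ('.' = open, '#' = black square)."""
    tokens = text.split()  # works whether rows are on separate lines or space-separated
    rows, cols = int(tokens[0]), int(tokens[1])
    grid = tokens[2:2 + rows]
    if len(grid) != rows or any(len(r) != cols for r in grid):
        raise ValueError("template does not match its size header")
    return grid

def find_slots(grid, min_len=2):
    """Return (row, col, direction, length) for every across/down run of open squares."""
    slots = []
    rows, cols = len(grid), len(grid[0])
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != ".":
                continue
            # An across slot starts where the square to the left is the edge or a block.
            if c == 0 or grid[r][c - 1] == "#":
                n = 0
                while c + n < cols and grid[r][c + n] == ".":
                    n += 1
                if n >= min_len:
                    slots.append((r, c, "across", n))
            # A down slot starts where the square above is the edge or a block.
            if r == 0 or grid[r - 1][c] == "#":
                n = 0
                while r + n < rows and grid[r + n][c] == ".":
                    n += 1
                if n >= min_len:
                    slots.append((r, c, "down", n))
    return slots
```

Each slot and its current letters can then be turned into a pattern like `B.R` and looked up in the word trie.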

Soon I hope to post more examples, some discussion of my technique, and the code used.

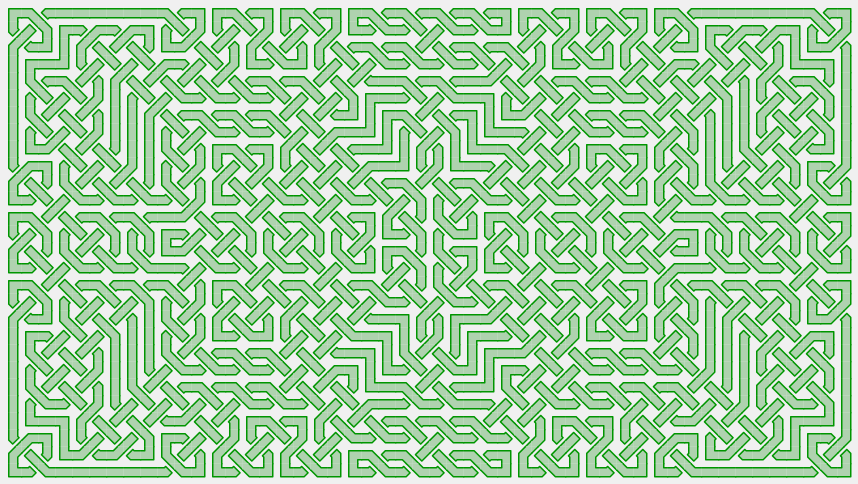

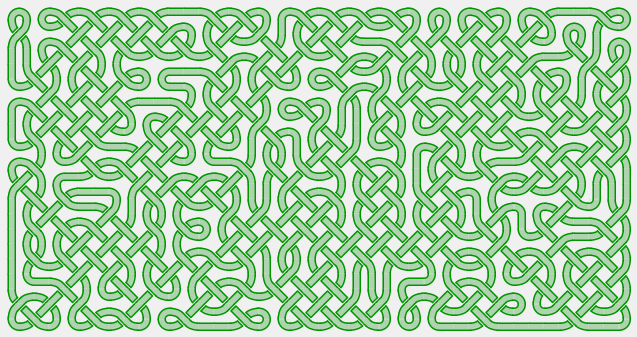

Celtic Knot Animations

I wrote some code to generate Celtic knotwork patterns.

Music by Flatwound, "The Long Goodbye."

LaTeX Asterisms ⁂

(I've decided that this isn't complicated enough to be called a project, so I've put up a blog post about it instead.)

Noise Portrait

To be added.

paletteSOM

To be added.